One question I have been asking myself a lot lately is what a good use case combining RPA and LLMs (Large Language Models) would look like.

Lately, when we hear the term "LLM", we tend to think only of ChatGPT. Although ChatGPT is based on an LLM, it is not the only solution that uses one.

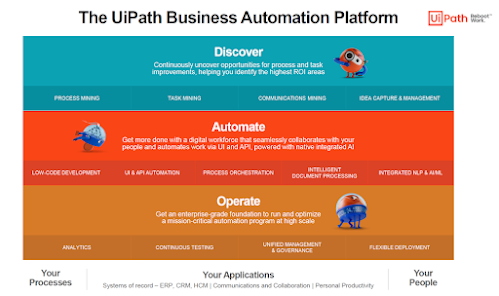

Communications Mining on the UiPath platform, for instance, uses a state-of-the-art LLM to automate the reading and understanding of business conversations. It uses unsupervised learning for clustering and then, with the help of supervised learning, classifies unstructured data such as emails and tickets. And it does not use ChatGPT!

For my demo, however, I decided to use ChatGPT. I combined a chatbot, an unattended robot and the ChatGPT LLM to create the following simple automation.

I should also make clear that, as it stands, it is not applicable to the real world, but I hope it can serve as a good base for more complex, real-world automations.

Here comes the scenario, after which I will go into some technical details:

- A doctor meets a patient and writes down the symptoms based on the conversation.

- He never gets to the point of giving the patient a diagnosis.

- The patient is then referred to a chatbot, which he can ask about his symptoms to get a possible diagnosis.

Let's have a look at the video below:

As the first step, the patient provides his account id, which the unattended robot verifies by querying the database, in this case an Excel sheet. The robot then retrieves the symptoms based on the account id, passes them to ChatGPT via an API call, receives a response with the possible diagnoses, and sends it back to the patient via the chatbot. While doing that, it also sends an email with the same information to the patient's email address, which was also retrieved when querying the account id.
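For readers who think in code, the robot's steps can be sketched roughly as follows in Python. This is only an illustration under assumptions, not the workflow used in the demo: the names `PatientRecord`, `PATIENTS`, `build_prompt` and `handle_chat_request` are hypothetical, the dictionary stands in for the Excel sheet, and the LLM call and the email step are passed in as plain functions (`ask_llm`, `send_email`) so that no particular API or mail service is assumed.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class PatientRecord:
    """One row of the (hypothetical) patient sheet."""
    account_id: str
    email: str
    symptoms: str


# Hypothetical stand-in for the Excel sheet the robot queries.
PATIENTS: Dict[str, PatientRecord] = {
    "A-1001": PatientRecord("A-1001", "patient@example.com",
                            "fever, dry cough, fatigue"),
}


def build_prompt(symptoms: str) -> str:
    """Turn the doctor's notes into a question for the language model."""
    return (
        "A patient reports the following symptoms: "
        f"{symptoms}. List possible diagnoses."
    )


def handle_chat_request(
    account_id: str,
    ask_llm: Callable[[str], str],
    send_email: Callable[[str, str, str], None],
    patients: Dict[str, PatientRecord] = PATIENTS,
) -> str:
    """Verify the account id, ask the LLM, email the answer, return it."""
    # Step 1: verify the account id against the "database".
    record = patients.get(account_id.strip())
    if record is None:
        return "Account id not found."

    # Step 2: pass the stored symptoms to the LLM (assumed callable).
    answer = ask_llm(build_prompt(record.symptoms))

    # Step 3: email the same information to the address on file.
    send_email(record.email, "Possible diagnoses", answer)

    # Step 4: the chatbot shows this text to the patient.
    return answer
```

In a real setup, `ask_llm` would wrap the API call to the chat model and `send_email` would wrap whatever mail activity or client the robot uses; keeping them as parameters makes it possible to try the flow with simple stand-in functions first.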

To me, the most fascinating part is the possibility of combining a front-end AI-enabled chatbot with an unattended robot and a back-end AI chatbot built on a Generative Pre-trained Transformer language model. AI is a great technology, but it always needs a robot to get things done, such as querying a database or sending an email, and this should always be taken into consideration when building automations. A brain always needs hands and feet to control!

Please share your ideas on how this simple case can be turned into a more productive one that creates more value.